Age‑Checking Tech Expands Amid Global Safety Push

- Governments worldwide are adopting stricter age‑verification rules for social platforms and online services.

- Advances in AI‑driven age‑assurance tools are making large‑scale checks more feasible and affordable.

- Early results from Australia’s teen social media ban are shaping international policy discussions.

Rising Pressure for Stronger Online Age Controls

A growing number of governments are embracing age‑verification mandates after years of resistance from major tech companies. Officials who once accepted arguments about technical limitations now believe the tools are mature enough to support broad regulation. Australia’s recent ban on social media accounts for children under 16 has accelerated this shift, prompting interest from regulators in Europe, Brazil and several U.S. states. Political leaders across the spectrum are signaling support, reflecting heightened concern about online abuse, mental health risks and the spread of AI‑generated child sexual imagery.

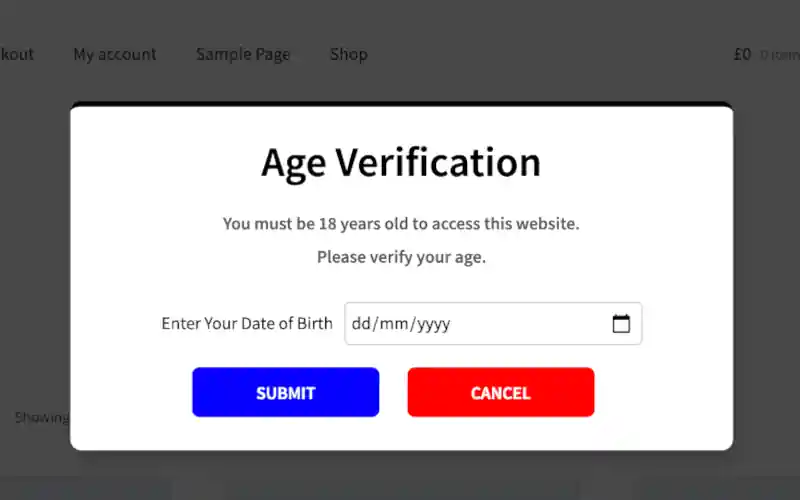

Advocates argue that age‑assurance technology has advanced significantly, making it possible to estimate a user’s age with increasing accuracy. Improvements in facial analysis, identity verification and parental‑approval workflows have contributed to this shift. Trade groups and certification schemes have also emerged, helping standardize how these tools are evaluated. These developments have encouraged policymakers to consider more aggressive approaches to youth safety online.

Tech companies, once reluctant to implement such systems, now face mounting regulatory pressure. Many platforms already collect behavioral data that can be used to infer age ranges with reasonable confidence. Combined with third‑party verification tools, these signals form a multilayered approach to age checking. The result is a growing ecosystem of vendors offering solutions tailored to social networks, app stores and content‑restricted services.

Age‑Assurance Tools Gain Accuracy and Scale

Vendors such as Yoti, k‑ID and Persona have become central players in the age‑verification market. Their tools rely on a mix of facial analysis, government ID checks and machine‑learning models to estimate a user’s age. App‑store operators like Apple and Google have also introduced features that allow parents to share age‑range information with developers. These combined efforts have lowered the cost and increased the reliability of age‑assurance systems.

Industry researchers note that verification costs have dropped sharply as AI models have improved. Tasks that once required human review or complex data triangulation can now be performed automatically for a fraction of the price. Basic machine‑only checks often cost well under one dollar, and large‑scale deployments can reduce the cost to just a few cents per user. More intensive methods remain available but are used less frequently as automated tools become more accurate.

Independent evaluations support claims of rapid progress. A long‑running study by the U.S. National Institute of Standards and Technology shows that average age‑estimation errors have steadily decreased over the past decade. Yoti’s latest model, for example, reports an average error of just over one year for teens aged 14 to 18. Persona cites similar performance for the 13‑to‑17 age group, suggesting that the technology is approaching the precision needed for regulatory compliance.
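For readers unfamiliar with the metric, an "average error" of this kind is usually a mean absolute error: the average gap, in years, between estimated and true age. The sketch below assumes that is what the vendors mean. The ages in it are made up for illustration and are not drawn from any vendor or NIST data.

```python
from statistics import mean


def mean_absolute_error(true_ages, estimated_ages):
    """Average absolute gap, in years, between true and estimated ages."""
    if len(true_ages) != len(estimated_ages):
        raise ValueError("both lists must have the same length")
    if not true_ages:
        raise ValueError("at least one sample is required")
    return mean(abs(t - e) for t, e in zip(true_ages, estimated_ages))


# Hypothetical sample: five teens and a model's estimates for each.
true_ages = [14, 15, 16, 17, 18]
estimated_ages = [15.0, 14.0, 17.5, 16.0, 19.5]

mae = mean_absolute_error(true_ages, estimated_ages)
print(f"Mean absolute error: {mae:.1f} years")
```

An average of about one year still leaves individual estimates that miss by more, which is why the threshold age itself is the hardest case.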

Despite these gains, challenges remain. Age‑estimation systems can struggle with certain skin tones, low‑quality images or older smartphone cameras. Privacy‑focused on‑device processing, while appealing to regulators and users, can reduce accuracy further. Vendors also report that teens frequently attempt to bypass checks using masks, heavy makeup or even toy figurines held up to the camera. Because of these limitations, supplementary methods such as ID verification or parental consent are still recommended for borderline cases.
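One way to picture the borderline-case handling is a buffer zone around the legal minimum age: estimates well above it pass, estimates well below it are blocked, and anything in between is sent to a stronger check. The sketch below is illustrative only. The function name, the two-year buffer and the default minimum age of 16 are assumptions, not any vendor's or regulator's actual rules.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    ESCALATE = "escalate"  # fall back to ID verification or parental consent


def decide(estimated_age: float, minimum_age: int = 16,
           buffer_years: float = 2.0) -> Decision:
    """Route a facial age estimate using a hypothetical buffer zone.

    Estimates within buffer_years of minimum_age are treated as too
    uncertain to act on and are escalated to a supplementary method.
    """
    if estimated_age >= minimum_age + buffer_years:
        return Decision.ALLOW
    if estimated_age < minimum_age - buffer_years:
        return Decision.BLOCK
    return Decision.ESCALATE


# Hypothetical estimates for three users.
for age in (25.0, 16.5, 12.0):
    print(age, decide(age).value)
```

A wider buffer sends more users to the slower, costlier checks, while a narrower one leans harder on the estimate's accuracy.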

Early Lessons From Australia’s Teen Social Media Ban

Australia’s eSafety commissioner is collecting data to evaluate the impact of its teen social media ban, with initial findings expected later this year. Since the law took effect in December, companies have blocked or removed millions of suspected underage accounts. Meta reported taking down roughly 550,000 accounts across Instagram, Facebook and Threads, while Snapchat removed about 415,000. Some of these removals may reflect restrictions on underage Google accounts rather than active social media use, according to industry participants.

Regulators in Europe and the United Kingdom are closely monitoring Australia’s rollout. European Commission President Ursula von der Leyen is expected to discuss age‑verification policy during an upcoming visit to Canberra. The U.K., which already requires age checks for adult websites, is considering additional rules for social media and AI chatbots. These international exchanges suggest that Australia’s approach could influence broader regulatory trends.

However, early implementation has been uneven. Some age‑verification vendors say social media companies are complying only minimally with the new requirements. In certain cases, platforms reportedly asked vendors to disable features that would make checks more robust. Industry observers believe companies are wary of setting a precedent that could lead to widespread adoption of similar laws. This tension reflects the broader debate over balancing user privacy, platform responsibility and regulatory oversight.

Age‑verification debates are not new, but the rapid rise of generative AI has intensified scrutiny. Researchers warn that AI‑generated child sexual imagery is becoming easier to produce and distribute, increasing pressure on governments to act. At the same time, privacy advocates caution that age‑checking systems must avoid creating new risks, such as unnecessary data collection or biometric misuse. The coming years will likely determine how societies reconcile these competing priorities as digital identity tools continue to evolve.